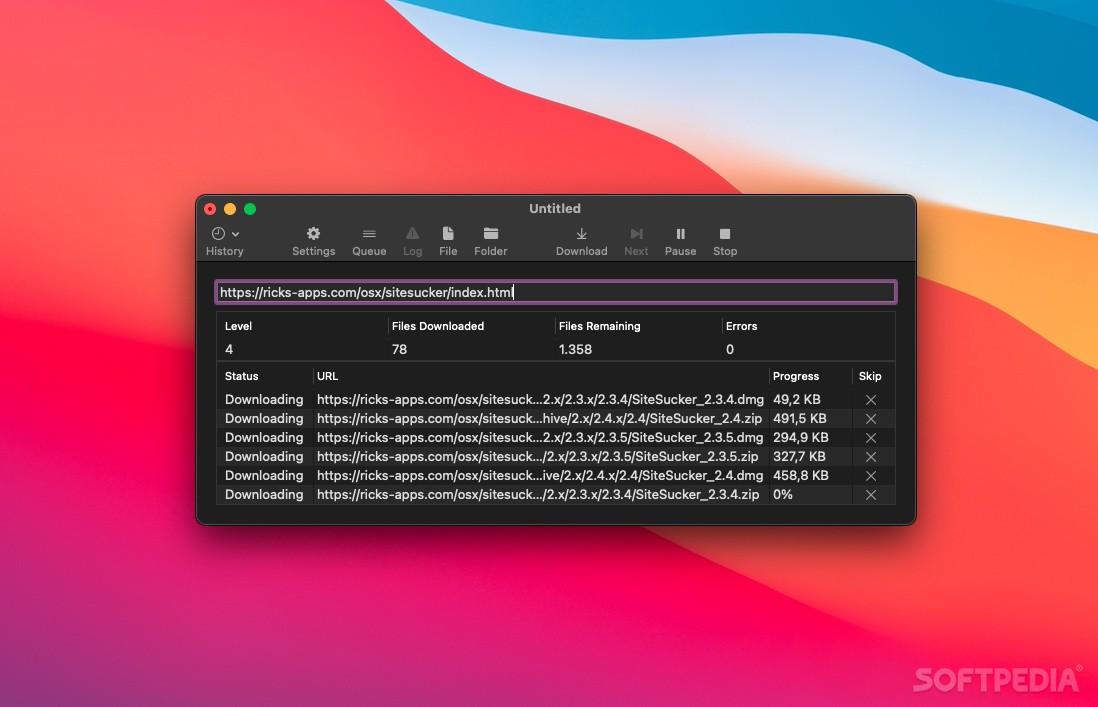

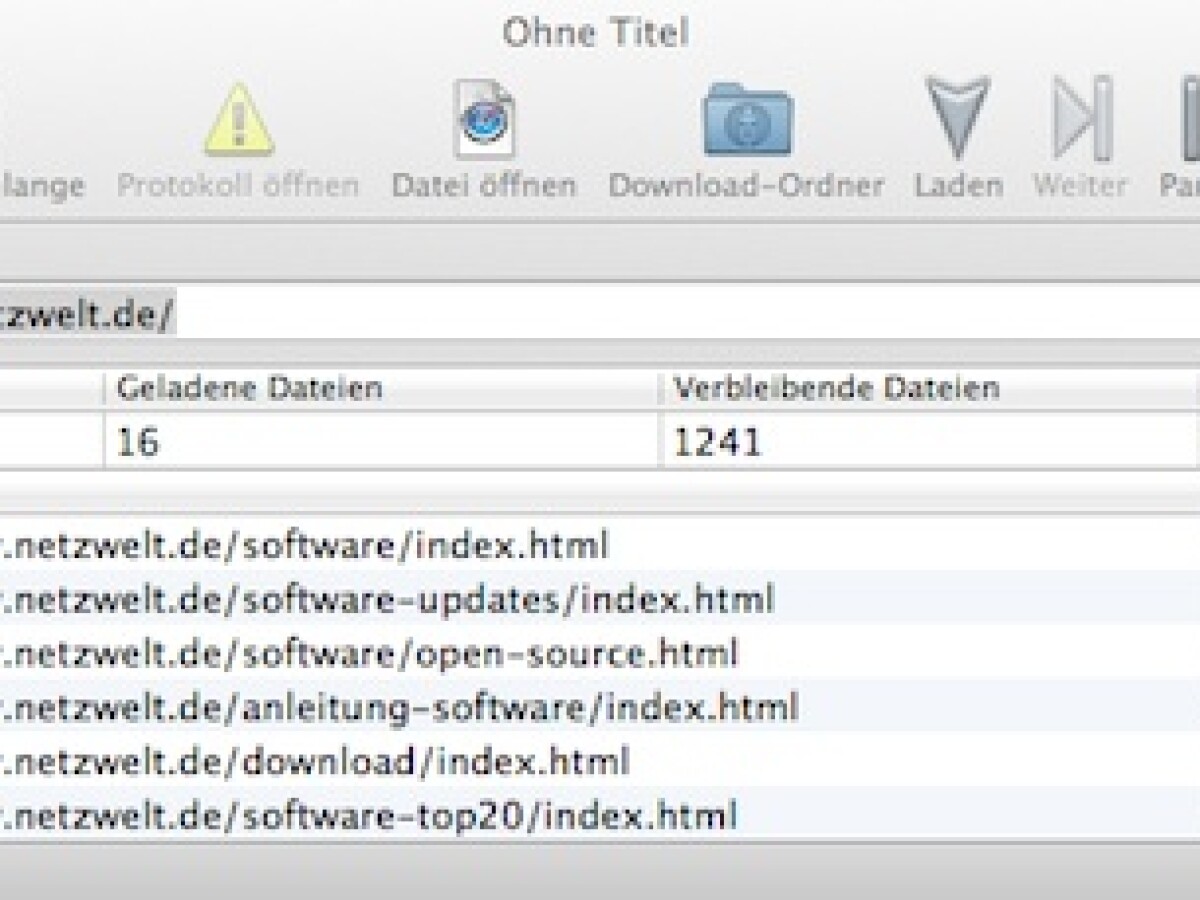

HTTrack is great: it has lots of useful features, including sophisticated file type download options, and it's easy to install … at least, easy under Windows (where it's known as WinHTTrack). This is because for the version that runs on OS X, BSD, and Linux (called WebHTTrack) you've got to compile the code or, for Mac, have MacPorts installed (which also requires Xcode to be installed), either of which can send you down "a maze of twisty little passages, all alike" if you're trying to get the job done quickly.

As I mainly use OS X, I wanted an easier solution. Some more research revealed a free OS X and iOS app called SiteSucker that turned out to be just what I needed.

SiteSucker can be used to make local copies of Web sites. Just enter a URL (Uniform Resource Locator), press return, and SiteSucker can download an entire Web site. … the site's Web pages, images, backgrounds, movies, and other files to your local hard drive, duplicating the site's directory structure. By default, SiteSucker "localizes" the files it downloads, allowing you to browse a site offline, but it can also download sites without modification.

You can save all the information about a download in a document. This allows you to create a document that you can use to perform the same download whenever you want. If SiteSucker is in the middle of a download when you choose the Save command, SiteSucker will pause the download and save its status with the document. When you open the document later, you can restart the download from where it left off by pressing the Resume button.

SiteSucker can also be controlled by AppleScript, and there's a utility called SuckList that creates "lists of numerically indexed URLs" and drives SiteSucker to download the files in the list. It can also drive SiteSucker using a manually produced list.
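To make the idea of a "list of numerically indexed URLs" concrete, here is a minimal Python sketch. It is not how SuckList itself works internally; the function name `indexed_urls`, the `{n}` placeholder, and the example.com address are all assumptions made up for illustration.

```python
def indexed_urls(template: str, start: int, stop: int, width: int = 0) -> list[str]:
    """Expand a template containing '{n}' into one URL per index.

    The range is inclusive on both ends. 'width' zero-pads the index,
    so width=3 turns 7 into '007'. The '{n}' placeholder is a
    hypothetical convention chosen for this sketch.
    """
    if start > stop:
        raise ValueError("start must not exceed stop")
    return [
        template.replace("{n}", str(i).zfill(width))
        for i in range(start, stop + 1)
    ]


if __name__ == "__main__":
    # Print a small list that could be pasted into a downloader.
    for url in indexed_urls("https://example.com/photos/img{n}.jpg", 1, 3, width=3):
        print(url)
```

A list like this, saved one URL per line, is the sort of manually produced list a download tool can then work through.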

How to Download Complete Website With HTTrack

… allows you to download a World Wide Web site from the Internet to a local directory, building recursively all directories, getting HTML, images, and other files from the server to your computer. HTTrack arranges the original site's relative link-structure. Simply open a page of the "mirrored" website in your browser, and you can browse the site from link to link, as if you were viewing it online. HTTrack can also update an existing mirrored site, and resume interrupted downloads. HTTrack is fully configurable, and has an integrated help system.

1. Click Next to begin creating a new project.
2. Give the project a name, category, and base path, then click Next.
3. Select Download website(s) for Action, then type each website's URL in the Web Addresses box, one URL per line. You can also store URLs in a TXT file and import it, which is convenient when you want to re-download the same sites later.
4. Adjust parameters if you want, then click Finish.

Once everything is downloaded, you can browse the site normally, simply by going to where the files were downloaded and opening the index.html or index.htm in a browser.

If you are an Ubuntu user, here's how you can use HTTrack to save a whole website:

1. Launch the Terminal and type the following command: `sudo apt-get install httrack`. It will ask for your Ubuntu password (if you've set one). The Terminal will download the tool in a few minutes.
2. Finally, type in the httrack command for the site you want and hit Enter. For this example, we downloaded the popular website, Brain Pickings. This will download the whole website for offline reading.

In Chrome, open Dev Tools, then log in to the … Also ensure your user agent string matches, as sessions are sometimes blocked if the user agent string is changed.
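The way a mirroring tool rearranges a site's links into a relative structure can be pictured with a short Python sketch. This is an illustration under assumptions, not HTTrack's actual algorithm: the helper names `local_path` and `relative_link` are hypothetical, directory URLs are assumed to be saved as `index.html`, and query strings are ignored.

```python
import posixpath
from urllib.parse import urlsplit


def local_path(url: str) -> str:
    """Map a URL to a path under the mirror folder: host/dir/file."""
    parts = urlsplit(url)
    path = parts.path or "/"
    if path.endswith("/"):
        # Assumed convention: a directory URL is stored as its index file.
        path += "index.html"
    return parts.netloc + path


def relative_link(from_url: str, to_url: str) -> str:
    """Return the link to write into the saved copy of from_url
    so that it points at the saved copy of to_url."""
    start_dir = posixpath.dirname(local_path(from_url))
    return posixpath.relpath(local_path(to_url), start=start_dir)


if __name__ == "__main__":
    print(local_path("https://example.com/blog/"))
    print(relative_link("https://example.com/blog/post.html",
                        "https://example.com/img/logo.png"))
```

Because every saved link is relative to the page that contains it, the mirrored folder can be moved anywhere on disk and still be browsed from link to link.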

WebCopy by Cyotek takes a website URL and scans it for links, pages, and media. As it finds pages, it recursively looks for more links, pages, and media until the whole website is discovered. Then you can use the configuration options to decide which parts to download offline.

The interesting thing about WebCopy is that you can set up multiple projects that each have their own settings and configurations. This makes it easy to re-download many sites whenever you want, each one in the same way every time. One project can copy many websites, so use them with an organized plan (e.g., a "Tech" project for copying tech sites).

How to Download an Entire Website With WebCopy

1. Navigate to File > New to create a new project.
2. Change the Save folder field to where you want the site saved.
3. Play around with Project > Rules… (learn more about WebCopy Rules).
4. Navigate to File > Save As… to save the project.
5. Click Copy in the toolbar to start the process.
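The recursive discovery described for WebCopy can be sketched as a breadth-first crawl. The Python below is only an illustration of that general technique, not WebCopy's code: the names `LinkCollector` and `discover` are hypothetical, the `fetch` callback is an assumption that stands in for real network access, and the crawl is limited to a single host.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collect href and src attribute values from a page's tags."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                self.links.append(value)


def discover(start_url: str, fetch) -> list[str]:
    """Return same-host URLs reachable from start_url, in discovery order.

    fetch(url) must return the page's HTML as a string, or None for
    resources that are not HTML (images, media, missing pages).
    """
    host = urlsplit(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)
        html = fetch(url)
        if html is None:
            continue  # nothing to scan for further links
        collector = LinkCollector()
        collector.feed(html)
        for link in collector.links:
            # Resolve relative links and drop '#fragment' parts.
            absolute = urldefrag(urljoin(url, link)).url
            if urlsplit(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


if __name__ == "__main__":
    # A tiny in-memory "site" stands in for the network.
    site = {
        "https://example.com/": '<a href="/about.html">About</a><img src="logo.png">',
        "https://example.com/about.html": '<a href="/">Home</a>',
    }
    print(discover("https://example.com/", site.get))
```

The `seen` set is what keeps the crawl from looping forever when pages link back to each other, and the host check plays a role loosely similar to a rule that decides which parts of a site are in scope.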
